My Frigate surveillance setup’s primary purpose is to keep an eye on my cats while I’m away.

An older setup supported only motion detection, but while on vacation, reviewing every motion event of the day to make sure that each cat was doing fine was somewhat cumbersome. I switched to Frigate to leverage its object detection capabilities, so that I only had to review events in which a cat was detected and could skip random motion.

That still turned out to be quite cumbersome, because the two 15-year-olds move little, whereas the 3-year-old moves a lot, so I still had to review lots of events to make sure all cats are alive and well. There are also issues with cats sometimes being detected as people, or not detected at all, but some parameter tuning made that good enough.

Frigate 0.17

The most recent Frigate release introduced a built-in object classification training tool!

Each object that’s already tracked can be extended with one or more classifiers, and the result of the classifier is added as an attribute or sub-label to the detection event. My config looks like this:

    objects:
      track:
        - person
        - cat
      filters:
        person:
          min_area: 1000 # Adjust based on your camera views
          threshold: 0.8 # Lower threshold for better detection
          min_score: 0.5
        cat:
          min_area: 500 # Smaller than person since cats are smaller
          threshold: 0.6 # Slightly lower for cats (harder to detect)
          min_score: 0.45

    classification:
      custom:
        cat:
          threshold: 0.8
          object_config:
            objects: # object labels to classify
              - cat
            classification_type: sub_label # or: attribute
          enabled: true
          name: cat

The objects section existed before - I just had to add the classification section to enable classifier training, which unlocks the classification UI:

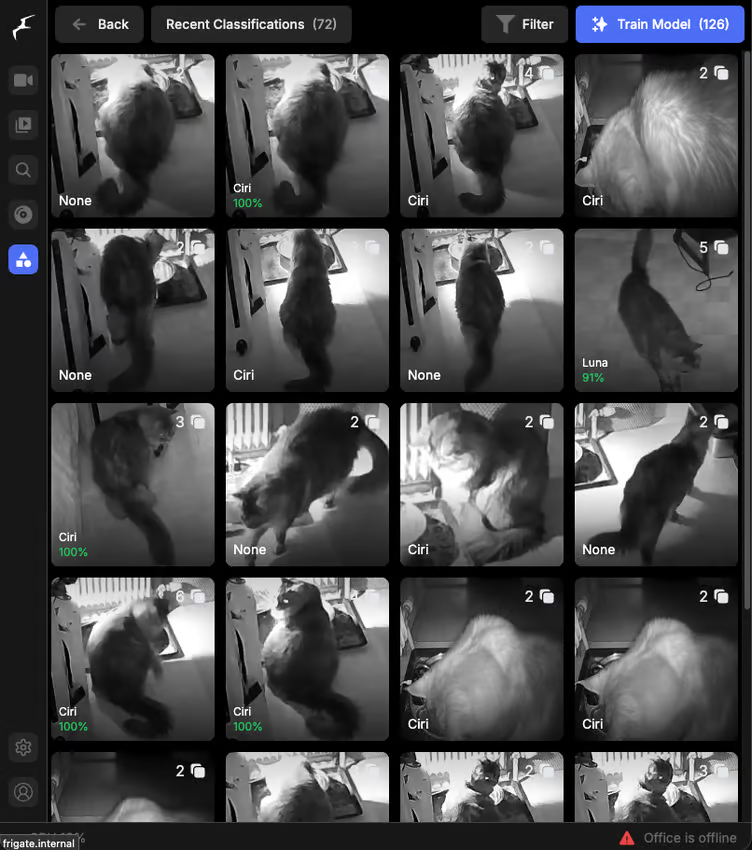

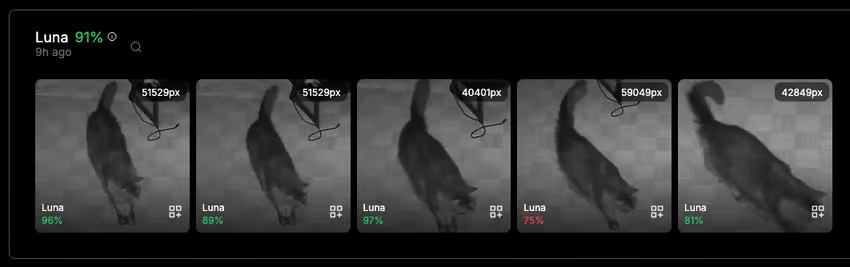

Each tile corresponds to a detection event of one of the objects listed in the config. Clicking it shows you all the snapshots from the detection event and allows you to label each:

I ran training before taking the screenshot above, so the classifier already has some opinions on each cat’s identity. Clicking the icon on a sample allows me to label it, and if I need more context, I can look at the whole event as well.

How well does it work?

The results so far are mixed.

I have already labeled hundreds of samples, but the classifier still spits out plenty of false positives. Why is that?

At night, all cats are black

Some cats are really hard to tell apart, especially through infrared-illuminated night vision.

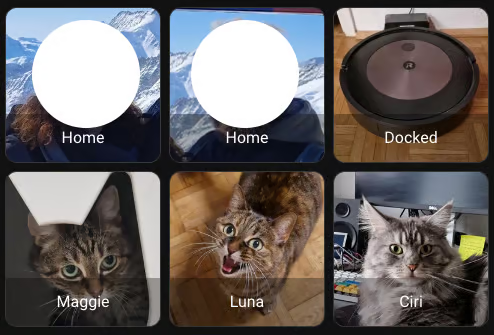

Here are pictures of all three cats in similar poses - can you tell the differences?

Meet Maggie, Luna, and Ciri (in order of descending age).

Maggie and Luna are both some kind of European Shorthair mix and look very similar. In a color image, you’d be able to tell them apart by Maggie being more grey-ish, and Luna being more brown-ish, but in night vision, there is barely a difference. When I try to tell them apart during classification, I look at the tail (Luna’s is fluffier) and the paws (3 of Maggie’s are white-tipped). Otherwise, I wouldn’t be able to tell them apart myself, despite living with them for years.

Ciri is easy - she’s a huge, grey Maine Coon/Ragdoll mix with long hair and a very distinctive tail.

So far, the classification model hasn’t picked up on the subtle differences between Maggie and Luna, and it classifies most samples as Ciri. It’s getting better with each round of training, though, so I have some hope.

What’s next?

There is still plenty of compute capacity left on my Frigate machine, so I’m thinking about running detection and classification at full camera resolution instead of preview resolution. I’ll also continue labeling training data.
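As a rough sketch of what that could look like: in Frigate, the stream used for detection is the one assigned the `detect` role, and the `detect` block sets the resolution it runs at. The camera name, stream URL, resolution, and frame rate below are placeholders, not values from my setup, and whether a higher detection resolution actually improves classification is something I still have to test.

```yaml
cameras:
  living_room: # hypothetical camera name
    ffmpeg:
      inputs:
        # Placeholder URL: point this at the camera's main (full-resolution) stream
        - path: rtsp://user:password@camera.example/main
          roles:
            - detect
            - record
    detect:
      width: 1920 # assumed native resolution of the main stream
      height: 1080
      fps: 5
```

The trade-off is CPU and detector load: every detection frame is now decoded at full resolution, which is why this is only an option with spare compute.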

Certainly not giving up yet. Maybe, one day, my Home Assistant dashboard will be able to tell whether each cat is on the couch, hydrating, or taking a dump.

Other use cases

Frigate’s new classification feature can be used not only to identify individuals, but also for state recognition, e.g. whether a gate is open or closed.
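A state classifier is configured next to the object classifiers, but with a `state_config` block instead of `object_config`. The following is a minimal sketch based on my reading of the Frigate documentation, not something I have running: the model name `gate`, the camera name `driveway`, and the crop coordinates are all made up for illustration.

```yaml
classification:
  custom:
    gate: # hypothetical model name
      threshold: 0.8
      state_config:
        motion: true # classify when motion overlaps the cropped region
        interval: 10 # additionally classify every 10 seconds
        cameras:
          driveway: # hypothetical camera name
            crop: [0, 180, 220, 400] # placeholder region covering the gate
```

Instead of following tracked objects, this kind of model looks at a fixed region of the camera image and assigns it one of the trained states.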

Detecting whether the cats’ water fountain is working properly is an actual need of mine. I previously tried to cover it by measuring the pump’s electricity use, but the differences were so minor that this kind of monitoring didn’t work reliably.

So, water flow detection could be interesting as well, but it is harder to generate training data for, because I’d have to disrupt the cats’ water supply intentionally. In the end, living beings’ needs take precedence over the urge to tinker.